5 May 2026

A Guide of Poor Decision Practices in Management for Tech Leaders and Founders

Most bad management decisions don't feel bad when you're making them. They even feel pragmatic. And informed. Sometimes even bold. You looked at what a competitor does, or what worked at your last company or simply what you've always believed about people and organizations - and you built a practice around that.

I've been reading a lot of research on this lately. Jeffrey Pfeffer and Robert Sutton spent years examining companies across industries, cataloguing the gap between what management thinks works and what the evidence actually shows. What they found wasn't surprising so much as uncomfortable: most organizations run on beliefs that circulate with the authority of fact, because nobody stops to ask why they work - or whether they do at all.

The catalogue of poor decision practices is large. Let's explore the ones that are most common, and most costly.

Casual Benchmarking: Copying Without Comprehension

There's nothing wrong with learning from others. The ability to transfer knowledge across contexts is one of the most efficient tools available to any leader. You don't need to burn your own runway to learn a lesson someone else already paid for.

The issue is that most benchmarking never gets past the surface. People copy what they can see - the vocabulary, the org chart, the perks list - and ignore the conditions that made any of it function. The visible stuff is almost never the load-bearing stuff.

Take the Spotify Squad Model (TL;DR Spotify organized into autonomous, cross-functional squads that functioned as mini-startups to maximize development speed, utilizing a matrix management system where product and design reported within the department while engineers were managed externally). Thousands of engineering organizations copied it, redrew their org charts with squads, tribes and guilds, put new labels on old teams, and called it a transformation. What they missed: the model was only ever aspirational at Spotify itself and never fully implemented (Source).

The co-author of the Spotify model and multiple agile coaches who worked there had been warning people not to copy it for years (Source). By the time most companies were implementing it, Spotify had already moved on. The structure required a specific combination of talent density, psychological safety infrastructure and deliberate cross-team coordination mechanisms that most imitators never built, because none of that made it into the blog post.

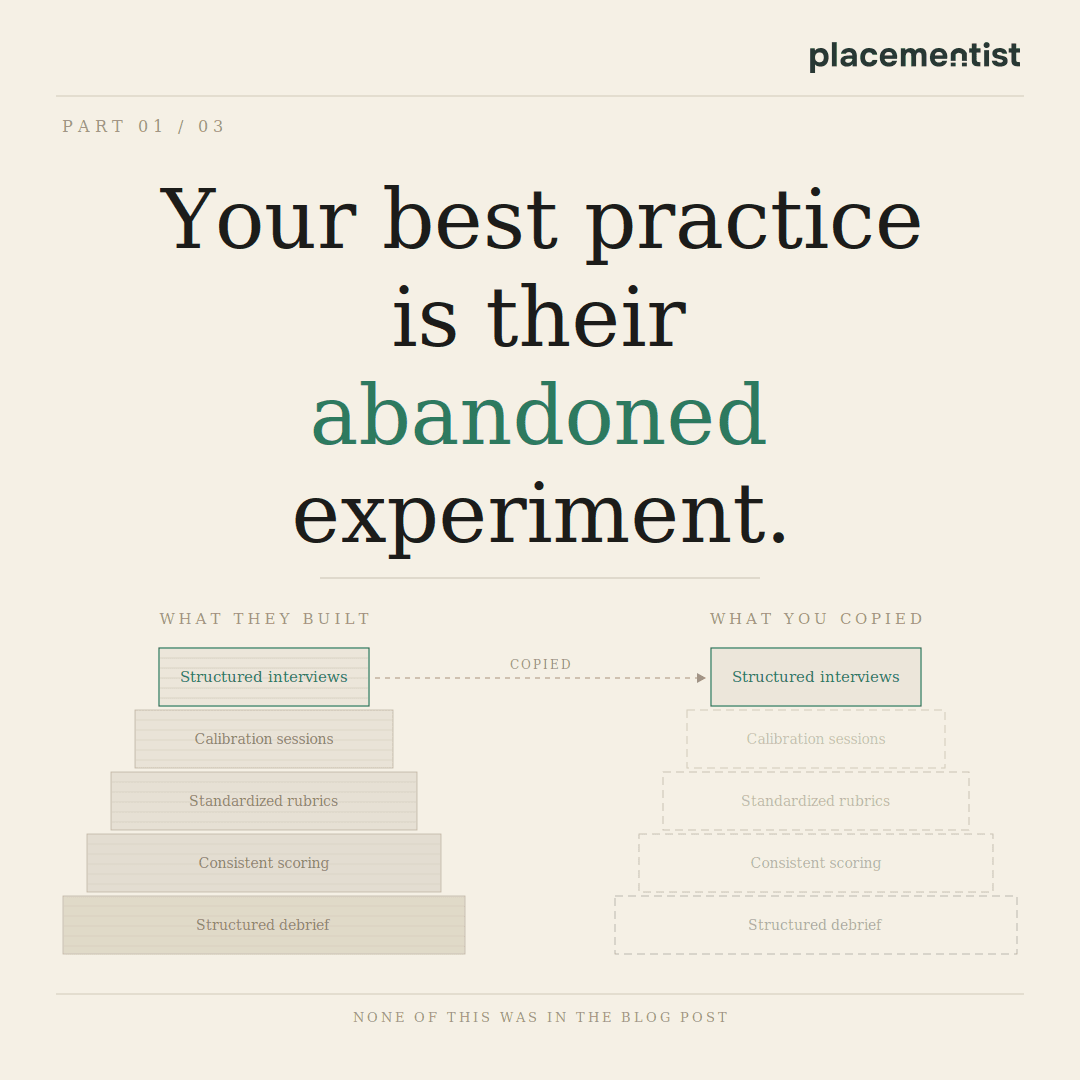

Hiring is where this gets expensive. The Google hiring process is the canonical example. For years, Google used brainteaser questions like "how many golf balls fit in a plane?" and the rest of the tech industry copied them, treating "cognitive shock value" as a proxy for intelligence. Then Google ran its own analysis. Laszlo Bock, Google's SVP of People Operations, concluded that brainteasers are "a complete waste of time" and "don't predict anything". (Source). They primarily made interviewers feel smart.

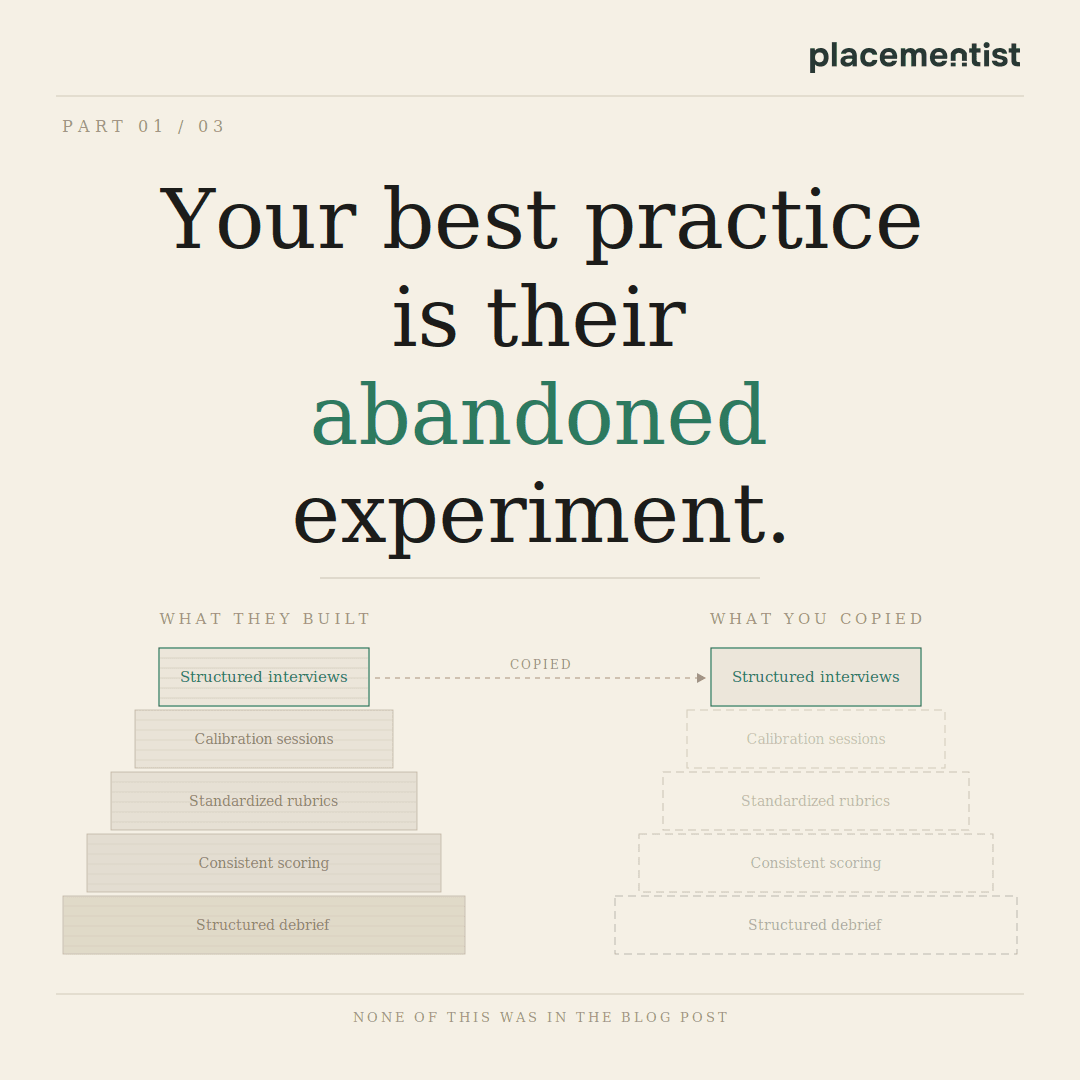

So Google pivoted to structured behavioral interviews with standardized rubrics. The industry copied those too, but without the rubrics and the calibration sessions. And the scoring consistency. Companies now run "structured interviews" where every interviewer asks different questions, scores candidates on different dimensions and still defers to whoever has the strongest opinion in the debrief.

This is how casual benchmarking works in practice: you observe the output, but not the system. And because different companies have fundamentally different business models, competitive dynamics and talent compositions, a practice that's structural in one context becomes decorative in another. Copying the practice without understanding the mechanism is how you get the cost without the result.

The logic behind why a practice works, and whether the conditions that make it work actually exist in your organization is almost never examined. Before you benchmark anything, the only honest questions are: what problem was this designed to solve, does that problem exist here, and are the underlying mechanisms present - or just the surface features that made it look like a good idea from the outside?

Doing What Seemed to Work Before

Past experience is valuable. It's also a trap.

The aphorism that nothing predicts future behavior better than past behavior is true for individuals in stable contexts. It breaks down badly when the situation has changed, when the original "learning" was actually a coincidence or when what you concluded from the experience was simply wrong.

Take the "Merit Pay" obsession. Many leaders who came up in high-intensity sales environments or investment banking conclude that aggressive, individual performance-based bonuses are the only way to drive excellence. Because it worked in a boiler-room sales floor, they apply it to product teams, engineers and creative directors.

The research tells a different story. A meta-analysis of over 100 years of research on incentives (Condly, S. J., Clark, R. E., & Stolovitch, H. D. (2003)) found that while individual "pay-for-performance" can increase productivity for simple, routine tasks, it often degrades performance for the kind of complex, collaborative work required in a modern startup. It encourages "silo" behavior, information hoarding and short-term gaming of metrics. But the logic of "it worked before, therefore it will work again" is so culturally embedded in executive identity that it's almost impossible to dislodge.

The fix isn't to abandon performance accountability, but to ask whether the incentive structure you're importing was designed for the type of work your team actually does.

The same applies to compensation design, go-to-market playbooks, product development cadence, org structures. A founding team that built a hit product with a small autonomous squad concludes that small autonomous squads are always the answer. They scale that model past the point where it stops working and wonder why output is collapsing.

One of the most common refrains I hear is: "Our best engineers came through referrals, so we’re doubling down on a referral-only strategy”. Referrals might produce good hires because of social accountability mechanisms and because your founding team's network is dense and high-trust. Scale the company past 50 people and that same channel starts delivering people who think like your current team, carry the same blind spots and have overlapping networks. Long after the point where you actually need cognitive diversity to solve new problems.

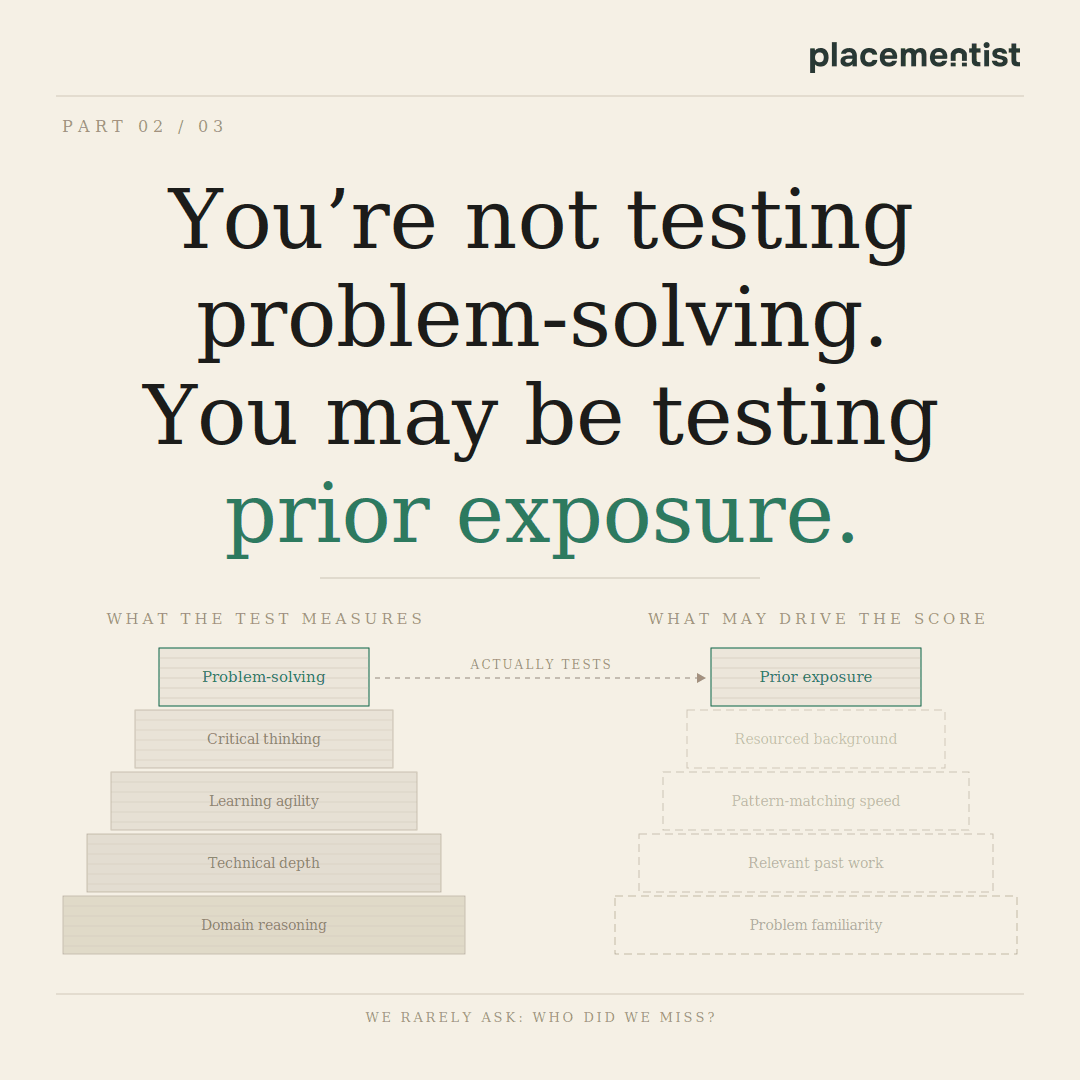

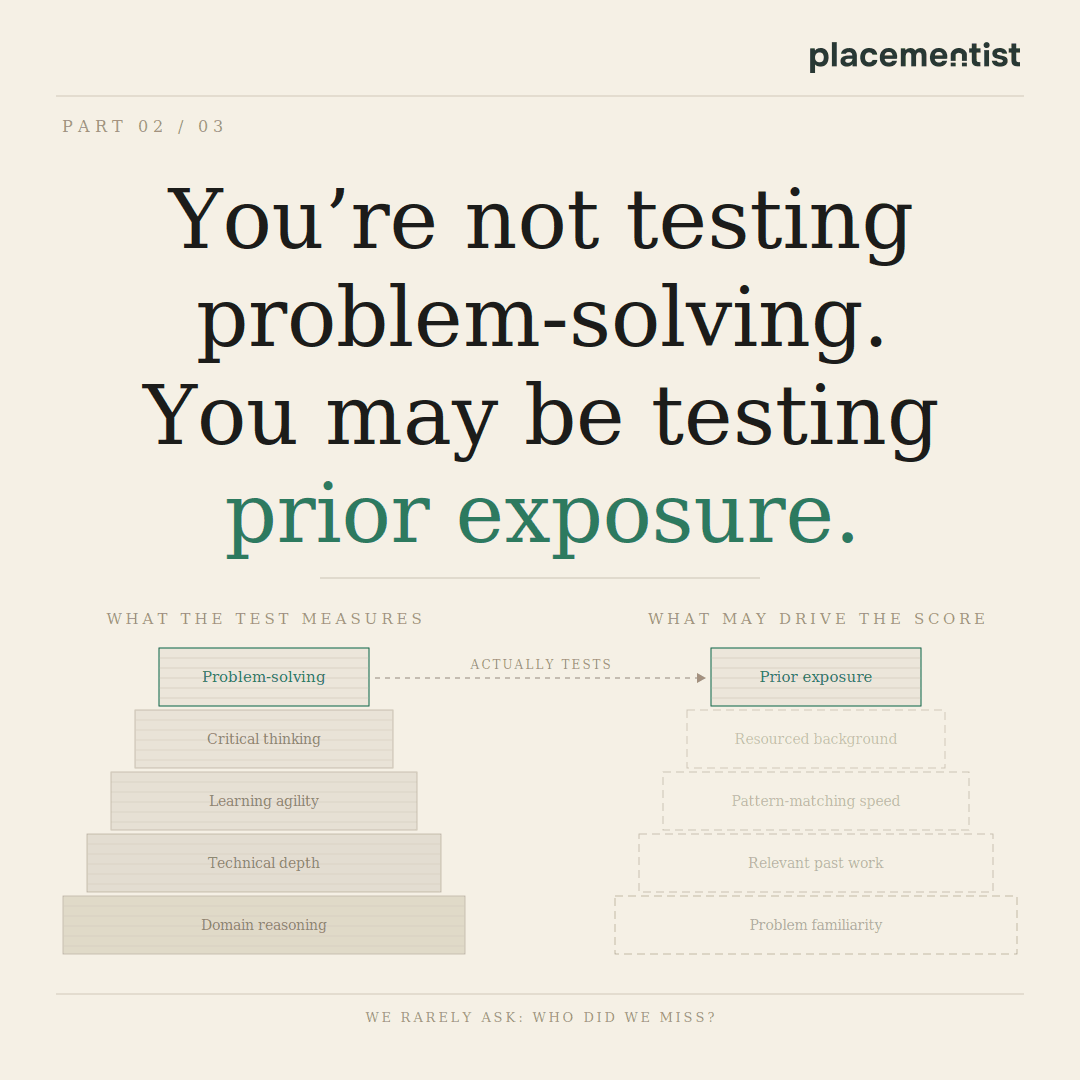

The same superstition applies to the “technical challenge”. Founders tell me: "We did a work sample last time and it weeded out the weak candidates”. Did it? Or did it just weed out the people who hadn't seen that specific problem before? We rarely ask about the counterfactual: Who did we miss?

What work samples actually measure is more conditional than most hiring managers assume. Roth, Bobko and McFarland (2005) found that once you correct for methodological errors in earlier studies, predictive validity is roughly a third lower than the benchmarks most practitioners cite. Separately - and this is a hypothesis worth testing in your own data - there's a plausible mechanism: candidates from well-resourced environments have often encountered your specific class of problem before. They pattern-match faster. That can look like exceptional ability when it's actually prior exposure. So it can be, that you aren't hiring the best problem-solver, but paying a premium for a person with the most relevant library of past solutions.

Before you let a past success dictate your next move, run it through the filter that Pfeffer and Sutton suggest:

Are you certain you're attributing success to the cause rather than something that happened despite it?

Is the new situation similar enough - same technology, same competitive dynamics, same people - that the old answer still applies?

Can you actually unpack why it worked? If not, you probably can't predict whether it will work again.

Following Unexamined Beliefs

The first two failure modes are at least correctable through better process. This one is harder, because the beliefs in question don't feel like assumptions, but like values. And values don't get audited the same way a process does. What makes ideologically-rooted beliefs so durable, as Pfeffer and Sutton document, is that contradictory evidence doesn't dislodge them - it gets explained away. The belief survives because questioning it feels like a threat to identity, not just to a practice.

Stock options became the dominant compensation mechanism in Silicon Valley not because the evidence supported them, but because a combination of ideology, tax law, accounting conventions and cultural momentum made them feel correct. The belief that equity creates alignment is intuitive, but research tells a more complicated story.

Studies from the National Bureau of Economic Research on executive compensation found that option schemes were often designed to serve managerial interests rather than align them with shareholders. More than 220 studies on equity ownership - across executives, directors, and institutional investors - showed no consistent relationship with firm financial performance (Dalton et al., 2003). And yet the belief persists.

Another example: first-mover advantage. Amazon wasn't the first company to sell books online. And Facebook wasn't the first social network. The belief in first-mover advantage is so pervasive that it shapes funding decisions, product roadmaps and competitive strategy - in companies where the evidence has been available for decades that execution and iteration matter far more than timing.

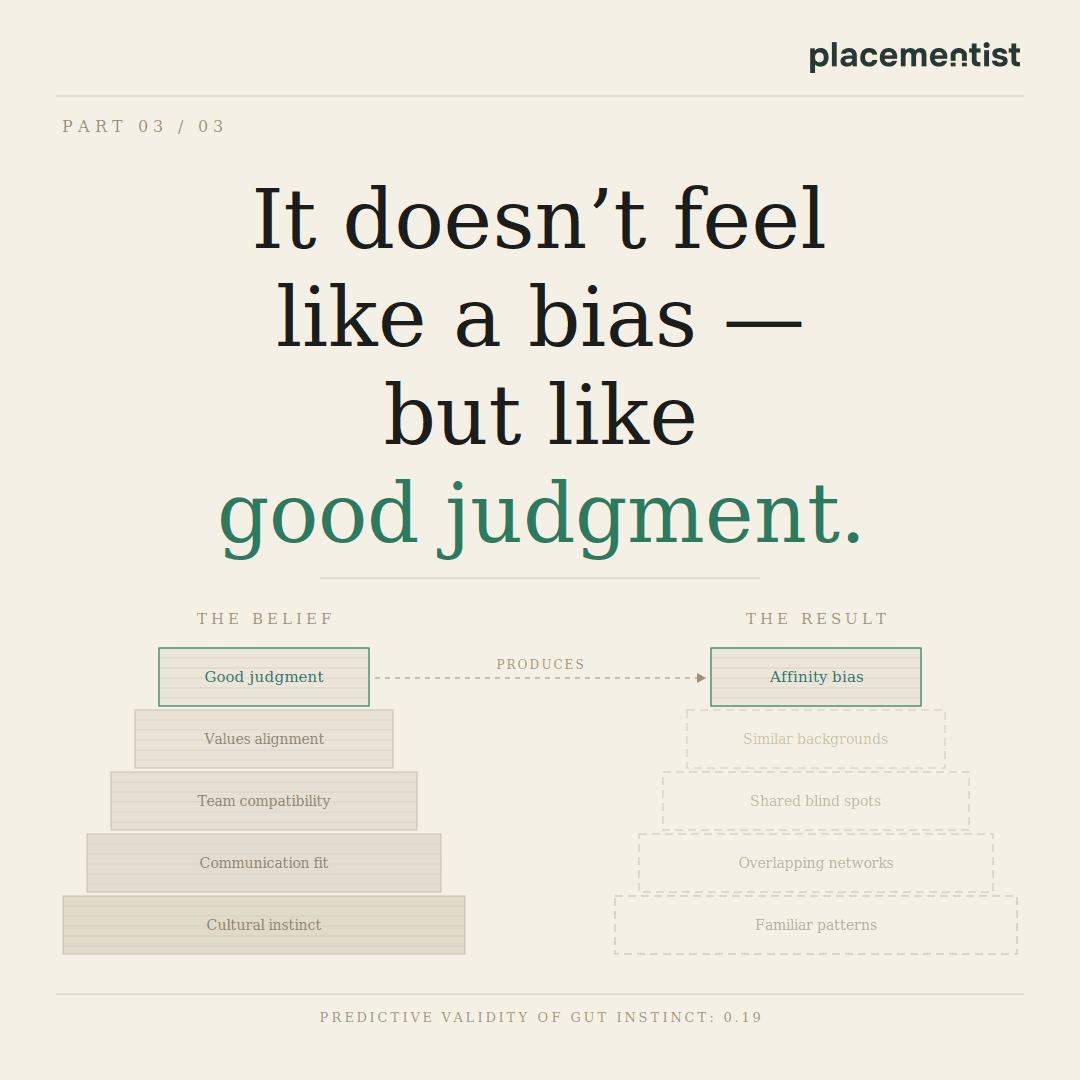

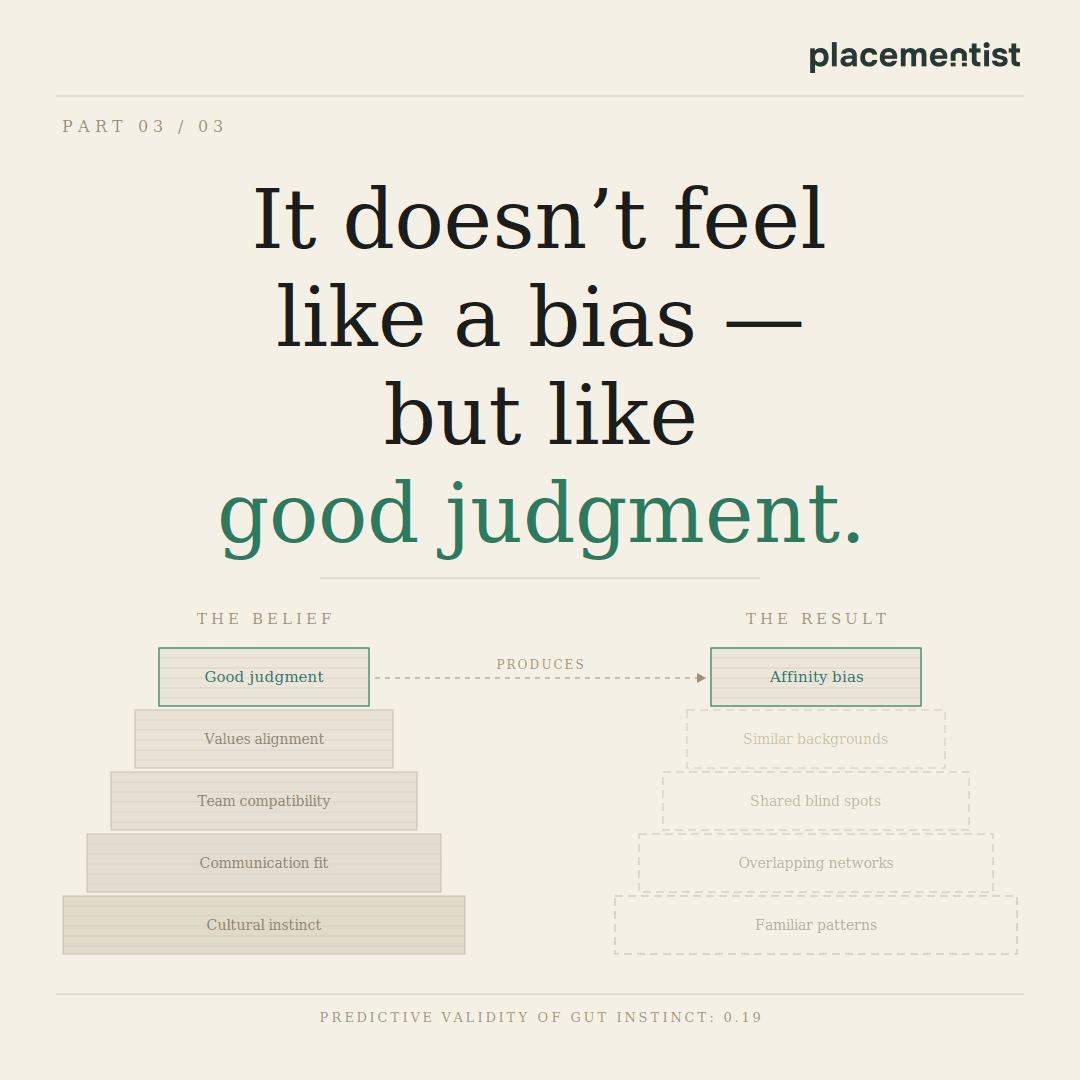

"Culture fit" is another canonical example. It starts as a reasonable heuristic - can this person operate effectively in this environment - and collapses quickly into affinity bias, meaning people hire people who remind them of themselves. The belief that gut instinct is a valid selection tool is similarly widespread and similarly unsupported: unstructured interviews predictive validity hover around 0.19 in predictive validity for job performance - barely better than chance in many conditions.

Beliefs rooted in ideology are "sticky” as Pfeffer and Sutton describe it. They resist contradictory data because the data threatens the identity of the person holding them.

Why Hiring Is Ground Zero for These Failures

Talent decisions are where all three failure modes converge with the highest organizational cost.

Casual benchmarking produces hiring processes that look professional without generating useful signal. Past-success reasoning produces evaluation criteria that made sense for the company you were three years ago. Unexamined beliefs produce filters that systematically exclude candidates who would outperform the ones you're selecting.

The stakes are not abstract. A mis-hire at a senior level can costs up to ~200% of annual salary once you factor in productivity loss, management overhead, team disruption and replacement costs. For a startup burning through runway, well, that’s a runway problem.

What would evidence-based hiring actually look like? It would start by asking what selection methods actually predict job performance, rather than which ones feel rigorous. It would build scorecards before interviews, not as a retrospective rationalization of a gut decision. It would track outcomes, what happened to the people you hired, and which signals in the process predicted their performance and use that data to update the process.

None of that requires a research team or a new software tool. Most companies just never do it.

Questions to Ask Before Your Next Decision

The following questions are adapted from Pfeffer and Sutton, applied to both general management and hiring specifically. Use them before deploying a practice you've borrowed or simply always believed in:

On benchmarking:

Do you understand why the practice works at the company you're copying - specifically, the mechanisms, culture, and conditions that make it effective there?

Would your CEO stake real money on the claim that the practice is producing results because of what you think, not despite something else happening at the same time?

What's the downside you're not seeing? Who walked away from the company you're benchmarking against because of this practice?

On past experience:

Is your current situation actually similar to the one where this worked before - same market dynamics, same team composition, same competitive environment?

Can you articulate the causal chain that connects the practice to the outcome? If not, you're extrapolating from a correlation you don't understand.

What evidence would change your mind? If the answer is "nothing" that's a belief, not a strategy.

On unexamined ideology:

Is your preference for this practice based on evidence - or on what fits your existing model of how organizations and people work?

Are you holding the same evidentiary standard for this decision that you'd hold for a product or financial decision?

Are you and your colleagues actively avoiding data that might complicate or contradict the current approach?

The Honest Closing

You don't have to run a research lab to make better decisions. But you do have to stop treating conviction as a substitute for evidence.

The most expensive organizational decisions - who to hire, who to promote, how to structure teams, which practices to scale - are also the ones made with the least rigor. Not because the leaders are careless, but because the organisational folklore is pretty convincing, the tools for doing better aren't promoted and nobody's tracking the outcomes systematically enough to know when they're wrong.

Start tracking outcomes. Build the feedback loop. Audit the practices you've never questioned. That's where the competitive advantage actually lives.

Ready to build your technical team with greater precision?

We help startups, scaleups, and enterprises reduce avoidable hiring costs and reallocate time and capital toward product, performance, and growth. Let’s discuss how placementist can bring scientific rigor to your technical hiring.

Graphics: Claude; Pixabay x Nanobanana

Most bad management decisions don't feel bad when you're making them. They even feel pragmatic. And informed. Sometimes even bold. You looked at what a competitor does, or what worked at your last company or simply what you've always believed about people and organizations - and you built a practice around that.

I've been reading a lot of research on this lately. Jeffrey Pfeffer and Robert Sutton spent years examining companies across industries, cataloguing the gap between what management thinks works and what the evidence actually shows. What they found wasn't surprising so much as uncomfortable: most organizations run on beliefs that circulate with the authority of fact, because nobody stops to ask why they work - or whether they do at all.

The catalogue of poor decision practices is large. Let's explore the ones that are most common, and most costly.

Casual Benchmarking: Copying Without Comprehension

There's nothing wrong with learning from others. The ability to transfer knowledge across contexts is one of the most efficient tools available to any leader. You don't need to burn your own runway to learn a lesson someone else already paid for.

The issue is that most benchmarking never gets past the surface. People copy what they can see - the vocabulary, the org chart, the perks list - and ignore the conditions that made any of it function. The visible stuff is almost never the load-bearing stuff.

Take the Spotify Squad Model (TL;DR Spotify organized into autonomous, cross-functional squads that functioned as mini-startups to maximize development speed, utilizing a matrix management system where product and design reported within the department while engineers were managed externally). Thousands of engineering organizations copied it, redrew their org charts with squads, tribes and guilds, put new labels on old teams, and called it a transformation. What they missed: the model was only ever aspirational at Spotify itself and never fully implemented (Source).

The co-author of the Spotify model and multiple agile coaches who worked there had been warning people not to copy it for years (Source). By the time most companies were implementing it, Spotify had already moved on. The structure required a specific combination of talent density, psychological safety infrastructure and deliberate cross-team coordination mechanisms that most imitators never built, because none of that made it into the blog post.

Hiring is where this gets expensive. The Google hiring process is the canonical example. For years, Google used brainteaser questions like "how many golf balls fit in a plane?" and the rest of the tech industry copied them, treating "cognitive shock value" as a proxy for intelligence. Then Google ran its own analysis. Laszlo Bock, Google's SVP of People Operations, concluded that brainteasers are "a complete waste of time" and "don't predict anything". (Source). They primarily made interviewers feel smart.

So Google pivoted to structured behavioral interviews with standardized rubrics. The industry copied those too, but without the rubrics and the calibration sessions. And the scoring consistency. Companies now run "structured interviews" where every interviewer asks different questions, scores candidates on different dimensions and still defers to whoever has the strongest opinion in the debrief.

This is how casual benchmarking works in practice: you observe the output, but not the system. And because different companies have fundamentally different business models, competitive dynamics and talent compositions, a practice that's structural in one context becomes decorative in another. Copying the practice without understanding the mechanism is how you get the cost without the result.

The logic behind why a practice works, and whether the conditions that make it work actually exist in your organization is almost never examined. Before you benchmark anything, the only honest questions are: what problem was this designed to solve, does that problem exist here, and are the underlying mechanisms present - or just the surface features that made it look like a good idea from the outside?

Doing What Seemed to Work Before

Past experience is valuable. It's also a trap.

The aphorism that nothing predicts future behavior better than past behavior is true for individuals in stable contexts. It breaks down badly when the situation has changed, when the original "learning" was actually a coincidence or when what you concluded from the experience was simply wrong.

Take the "Merit Pay" obsession. Many leaders who came up in high-intensity sales environments or investment banking conclude that aggressive, individual performance-based bonuses are the only way to drive excellence. Because it worked in a boiler-room sales floor, they apply it to product teams, engineers and creative directors.

The research tells a different story. A meta-analysis of over 100 years of research on incentives (Condly, S. J., Clark, R. E., & Stolovitch, H. D. (2003)) found that while individual "pay-for-performance" can increase productivity for simple, routine tasks, it often degrades performance for the kind of complex, collaborative work required in a modern startup. It encourages "silo" behavior, information hoarding and short-term gaming of metrics. But the logic of "it worked before, therefore it will work again" is so culturally embedded in executive identity that it's almost impossible to dislodge.

The fix isn't to abandon performance accountability, but to ask whether the incentive structure you're importing was designed for the type of work your team actually does.

The same applies to compensation design, go-to-market playbooks, product development cadence, org structures. A founding team that built a hit product with a small autonomous squad concludes that small autonomous squads are always the answer. They scale that model past the point where it stops working and wonder why output is collapsing.

One of the most common refrains I hear is: "Our best engineers came through referrals, so we’re doubling down on a referral-only strategy”. Referrals might produce good hires because of social accountability mechanisms and because your founding team's network is dense and high-trust. Scale the company past 50 people and that same channel starts delivering people who think like your current team, carry the same blind spots and have overlapping networks. Long after the point where you actually need cognitive diversity to solve new problems.

The same superstition applies to the “technical challenge”. Founders tell me: "We did a work sample last time and it weeded out the weak candidates”. Did it? Or did it just weed out the people who hadn't seen that specific problem before? We rarely ask about the counterfactual: Who did we miss?

What work samples actually measure is more conditional than most hiring managers assume. Roth, Bobko and McFarland (2005) found that once you correct for methodological errors in earlier studies, predictive validity is roughly a third lower than the benchmarks most practitioners cite. Separately - and this is a hypothesis worth testing in your own data - there's a plausible mechanism: candidates from well-resourced environments have often encountered your specific class of problem before. They pattern-match faster. That can look like exceptional ability when it's actually prior exposure. So it can be, that you aren't hiring the best problem-solver, but paying a premium for a person with the most relevant library of past solutions.

Before you let a past success dictate your next move, run it through the filter that Pfeffer and Sutton suggest:

Are you certain you're attributing success to the cause rather than something that happened despite it?

Is the new situation similar enough - same technology, same competitive dynamics, same people - that the old answer still applies?

Can you actually unpack why it worked? If not, you probably can't predict whether it will work again.

Following Unexamined Beliefs

The first two failure modes are at least correctable through better process. This one is harder, because the beliefs in question don't feel like assumptions, but like values. And values don't get audited the same way a process does. What makes ideologically-rooted beliefs so durable, as Pfeffer and Sutton document, is that contradictory evidence doesn't dislodge them - it gets explained away. The belief survives because questioning it feels like a threat to identity, not just to a practice.

Stock options became the dominant compensation mechanism in Silicon Valley not because the evidence supported them, but because a combination of ideology, tax law, accounting conventions and cultural momentum made them feel correct. The belief that equity creates alignment is intuitive, but research tells a more complicated story.

Studies from the National Bureau of Economic Research on executive compensation found that option schemes were often designed to serve managerial interests rather than align them with shareholders. More than 220 studies on equity ownership - across executives, directors, and institutional investors - showed no consistent relationship with firm financial performance (Dalton et al., 2003). And yet the belief persists.

Another example: first-mover advantage. Amazon wasn't the first company to sell books online. And Facebook wasn't the first social network. The belief in first-mover advantage is so pervasive that it shapes funding decisions, product roadmaps and competitive strategy - in companies where the evidence has been available for decades that execution and iteration matter far more than timing.

"Culture fit" is another canonical example. It starts as a reasonable heuristic - can this person operate effectively in this environment - and collapses quickly into affinity bias, meaning people hire people who remind them of themselves. The belief that gut instinct is a valid selection tool is similarly widespread and similarly unsupported: unstructured interviews predictive validity hover around 0.19 in predictive validity for job performance - barely better than chance in many conditions.

Beliefs rooted in ideology are "sticky” as Pfeffer and Sutton describe it. They resist contradictory data because the data threatens the identity of the person holding them.

Why Hiring Is Ground Zero for These Failures

Talent decisions are where all three failure modes converge with the highest organizational cost.

Casual benchmarking produces hiring processes that look professional without generating useful signal. Past-success reasoning produces evaluation criteria that made sense for the company you were three years ago. Unexamined beliefs produce filters that systematically exclude candidates who would outperform the ones you're selecting.

The stakes are not abstract. A mis-hire at a senior level can costs up to ~200% of annual salary once you factor in productivity loss, management overhead, team disruption and replacement costs. For a startup burning through runway, well, that’s a runway problem.

What would evidence-based hiring actually look like? It would start by asking what selection methods actually predict job performance, rather than which ones feel rigorous. It would build scorecards before interviews, not as a retrospective rationalization of a gut decision. It would track outcomes, what happened to the people you hired, and which signals in the process predicted their performance and use that data to update the process.

None of that requires a research team or a new software tool. Most companies just never do it.

Questions to Ask Before Your Next Decision

The following questions are adapted from Pfeffer and Sutton, applied to both general management and hiring specifically. Use them before deploying a practice you've borrowed or simply always believed in:

On benchmarking:

Do you understand why the practice works at the company you're copying - specifically, the mechanisms, culture, and conditions that make it effective there?

Would your CEO stake real money on the claim that the practice is producing results because of what you think, not despite something else happening at the same time?

What's the downside you're not seeing? Who walked away from the company you're benchmarking against because of this practice?

On past experience:

Is your current situation actually similar to the one where this worked before - same market dynamics, same team composition, same competitive environment?

Can you articulate the causal chain that connects the practice to the outcome? If not, you're extrapolating from a correlation you don't understand.

What evidence would change your mind? If the answer is "nothing" that's a belief, not a strategy.

On unexamined ideology:

Is your preference for this practice based on evidence - or on what fits your existing model of how organizations and people work?

Are you holding the same evidentiary standard for this decision that you'd hold for a product or financial decision?

Are you and your colleagues actively avoiding data that might complicate or contradict the current approach?

The Honest Closing

You don't have to run a research lab to make better decisions. But you do have to stop treating conviction as a substitute for evidence.

The most expensive organizational decisions - who to hire, who to promote, how to structure teams, which practices to scale - are also the ones made with the least rigor. Not because the leaders are careless, but because the organisational folklore is pretty convincing, the tools for doing better aren't promoted and nobody's tracking the outcomes systematically enough to know when they're wrong.

Start tracking outcomes. Build the feedback loop. Audit the practices you've never questioned. That's where the competitive advantage actually lives.

Ready to build your technical team with greater precision?

We help startups, scaleups, and enterprises reduce avoidable hiring costs and reallocate time and capital toward product, performance, and growth. Let’s discuss how placementist can bring scientific rigor to your technical hiring.

Graphics: Claude; Pixabay x Nanobanana

Latest Articles

5 May 2026

A Guide of Poor Decision Practices in Management for Tech Leaders and Founders

5 May 2026

A Guide of Poor Decision Practices in Management for Tech Leaders and Founders

17 March 2026

The Developer’s Guide to Secure Job Hunting in 2026

17 March 2026

The Developer’s Guide to Secure Job Hunting in 2026

11 March 2026

The Evidence-Based Guide to Candidate Attraction

11 March 2026

The Evidence-Based Guide to Candidate Attraction

Ready to build your technical team?

Let's discuss your hiring needs and how evidence-based recruitment might help

Ready to build your technical team?

Let's discuss your hiring needs and how evidence-based recruitment might help

Ready to build your technical team?

Let's discuss your hiring needs and how evidence-based recruitment

might help.

Ready to build your technical team?

Let's discuss your hiring needs and how evidence-based recruitment might help

Quick links

Quick links

Quick links

Quick links